Robotic system offers independence to individuals who need help with eating

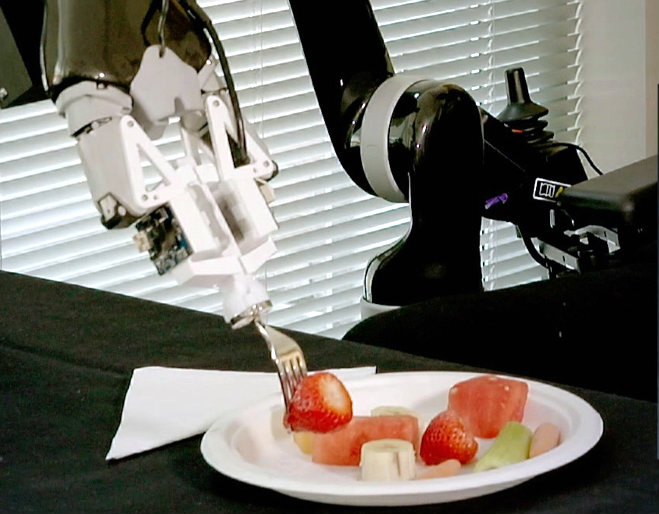

About a million Americans with injury- or age-related disabilities need someone to help them eat. Now NIBIB-funded engineers have taught a robot the strategies needed to pick up food with a fork and gingerly deliver it to a person’s mouth.

Siddhartha Srinivasa, Ph.D., the Boeing Endowed Professor at the School of Computer Science and Engineering at the University of Washington, is known as a passionate roboticist who builds complete robotic systems that integrate perception, planning, and control to perform practical tasks in the real world. Srinivasa and his team have now turned their attention to the million or so people in the U.S. alone who need help eating.

Their development of a robot named ADA (short for Assistive Dexterous Arm) is reported in the April issue of IEEE Robotics and Automation Letters.¹

Says Grace Peng, Ph.D., director of the NIBIB program in Mathematical Modeling, Simulation, and Analysis, “We have supported this group’s outstanding work developing systems for wheelchair control based on understanding the user’s intent. This current paper paints an excellent picture of the parameters that need to be considered from an engineering point of view to develop a feeding robot.”

Early in the design of ADA, the engineers realized they had to start from the ground up. In this case, the starting point was skewering pieces of food onto a fork. They began by watching, measuring, and cataloguing how people do it. Perhaps unsurprisingly to trained engineers, people used different skewering strategies depending on the size, shape, stiffness, pliability, and other physical properties of the foods, which included strawberries, banana pieces, melon cubes, strips of celery, and baby carrots.

The team used the data on the strategies people use to eat different foods to program ADA to accurately identify each item on a plate and then perform the movements best suited to skewering that item and delivering it to the recipient’s mouth. For example, a strawberry is relatively sturdy, but a piece of banana is soft enough that it had to be skewered at an angle to keep it from simply sliding off the fork.

Strips of celery required a specific approach to both skewering and delivery. The robot was taught to stick the fork into one end of the strip, then lift and turn the piece so that the opposite end of the celery, clear of the fork’s sharp tines, was cleanly presented to the recipient.

The group’s work is aimed at helping people who are unable to perform essential tasks live more independently. Says Srinivasa, “We think our technologies can help those dependent on a caregiver to feed them every day to regain some independence and control over their lives.”

Beyond that important goal, Srinivasa points out that ADA can also help caregivers, who are often overtaxed. In this case, a caregiver could set up the food and the robot and then attend to other tasks or focus on socializing with the client. “In this way we see ADA as a win-win for caregivers and their clients that will ultimately improve the experience for everyone involved—especially as the country’s population ages and the need to optimize strategies for their care increases.”

Before the team’s results were published in April, ADA won the Best Demo Award at the Neural Information Processing Systems meeting in December 2018 and the Best Tech Paper Award at the joint Association for Computing Machinery / Institute of Electrical and Electronics Engineers International Conference on Human-Robot Interaction in March 2019.²

The work was supported by Grant #R01EB019335 from the National Institute of Biomedical Imaging and Bioengineering (NIBIB), the National Science Foundation, the Office of Naval Research, Amazon, and Honda.

1. Towards Robotic Feeding: Role of Haptics in Fork-Based Food Manipulation. T. Bhattacharjee, G. Lee, H. Song, and S. S. Srinivasa. IEEE Robotics and Automation Letters, Vol. 4, No. 2, April 2019.

2. Transfer Depends on Acquisition: Analyzing Manipulation Strategies for Robotic Feeding. D. Gallenberger, T. Bhattacharjee, Y. Kim, and S. S. Srinivasa. ACM/IEEE International Conference on Human-Robot Interaction, 2019.