Working all the angles sharpens dimensions of confocal images

Confocal microscopes are invaluable in biomedical research because they can produce three-dimensional images of thick samples. However, these images are blurry along the third (depth) dimension, and the blurring worsens as samples get thicker. Now a team led by NIBIB scientists has significantly improved confocal imaging, producing higher-resolution 3D images of fine structures in living samples.

The work was led by NIH Senior Investigator Hari Shroff, PhD, in collaboration with NIH staff scientist Yicong Wu, PhD, and visiting graduate student Xiaofei Han at the National Institute of Biomedical Imaging and Bioengineering. Additional collaborators included Patrick La Riviere at the University of Chicago, Arpita Upadhyaya and Sougata Roy at the University of Maryland, Daniel Colón-Ramos at Yale University, and industrial partners at Applied Scientific Instrumentation.

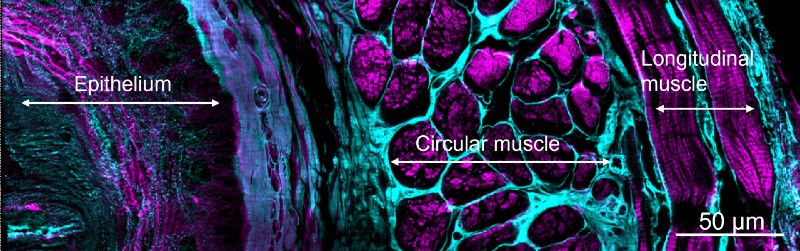

The team combined innovative hardware (multiview microscopes) and state-of-the-art software (deep learning techniques) to improve confocal microscopy in single cells, living worm embryos, and fly, mouse, and worm tissues.

“It was exciting to see several different computational tools we have developed together over the last few years come together in this paper,” said La Riviere, who has collaborated with Shroff since 2014, “some being redeployed after finding success in our previous computational microscopes and some being used for the first time in the face of these large, scattering samples.”

The main hardware advance by Shroff and colleagues was a line-scanning confocal microscope that views the sample from three directions, an approach they call triple-view line-scanning confocal microscopy. The researchers then used software to fuse the three views into a single high-resolution 3D image, improving overall resolution and performance in thick samples.
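Fusing views that are each blurry along a different axis can be illustrated with joint Richardson-Lucy deconvolution, a widely used multiview fusion technique in which a single estimate is updated against each view in turn. The Python/NumPy sketch below is a 2D toy, not the team's actual pipeline, and rests on stated assumptions: the views are already registered to a common frame, each view's point spread function (PSF) is an anisotropic Gaussian, and noise is ignored. All function names are hypothetical.

```python
import numpy as np


def gaussian_psf(shape, sigma_y, sigma_x):
    """Anisotropic 2D Gaussian PSF centred at (ny // 2, nx // 2), summing to 1."""
    ny, nx = shape
    yy, xx = np.meshgrid(np.arange(ny) - ny // 2, np.arange(nx) - nx // 2, indexing="ij")
    psf = np.exp(-(yy**2 / (2 * sigma_y**2) + xx**2 / (2 * sigma_x**2)))
    return psf / psf.sum()


def make_otf(psf):
    """Move the PSF centre to the origin and take its real FFT (the OTF)."""
    return np.fft.rfft2(np.fft.ifftshift(psf))


def convolve(img, otf):
    """Circular convolution of img with the PSF behind otf."""
    return np.fft.irfft2(np.fft.rfft2(img) * otf, s=img.shape)


def correlate(img, otf):
    """Circular correlation (adjoint of convolve), used in the RL back-projection."""
    return np.fft.irfft2(np.fft.rfft2(img) * np.conj(otf), s=img.shape)


def joint_rl_deconvolve(views, otfs, n_iter=30, eps=1e-12):
    """Fuse registered views by joint Richardson-Lucy deconvolution.

    The estimate is updated against each view in turn, so each view
    contributes the detail its own PSF resolves best.
    """
    estimate = np.mean(views, axis=0)
    for _ in range(n_iter):
        for view, otf in zip(views, otfs):
            # Compare the blurred estimate with what this view actually recorded.
            ratio = view / np.maximum(convolve(estimate, otf), eps)
            # Multiplicative update keeps the estimate non-negative.
            estimate = estimate * correlate(ratio, otf)
    return estimate
```

For example, simulating two views of the same point sources, one blurred mainly along y and the other mainly along x, and passing both to `joint_rl_deconvolve` yields a fused image closer to the ground truth than either view alone, because each view supplies the resolution the other lacks.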

The “line scanning” part of the new microscope's name refers to a tightly focused line of light that is scanned across the sample, imaging it one line at a time. Line scanning also allowed the researchers to achieve even higher resolution: they isolated the fluorescence in the immediate vicinity of each line and used computer programs to knit together the resulting “super-resolution” images from each view.
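The idea of isolating fluorescence near each illuminated line can be illustrated with a 1D toy along the scan axis: sweep a Gaussian illumination line across the sample, blur the emitted light with a detection PSF, and keep only camera pixels within a narrow "virtual slit" around the current line position. This hypothetical sketch (the names `line_scan` and `gaussian_kernel` and all parameters are illustrative assumptions) shows only the slit step, which narrows the effective PSF along the scan direction; it does not reproduce the team's super-resolution processing or multiview fusion.

```python
import numpy as np


def gaussian_kernel(sigma):
    """Odd-length, normalised Gaussian detection PSF (odd length keeps 'same' convolution centred)."""
    half = int(np.ceil(4 * sigma))
    x = np.arange(-half, half + 1)
    kernel = np.exp(-(x**2) / (2 * sigma**2))
    return kernel / kernel.sum()


def line_scan(sample, sigma_ill, sigma_det, slit_half_width=None):
    """Sweep an illumination line across a 1D profile along the scan axis.

    For each line position y0, fluorescence excited by a Gaussian line
    (width sigma_ill) is blurred by the detection PSF (width sigma_det).
    With a virtual slit, only pixels within slit_half_width of y0 are kept;
    with slit_half_width=None all pixels are summed, giving a widefield-like image.
    Assumes the sample is longer than the detection kernel.
    """
    n = sample.size
    pixels = np.arange(n)
    detection_psf = gaussian_kernel(sigma_det)
    image = np.zeros(n)
    for y0 in range(n):
        illumination = np.exp(-((pixels - y0) ** 2) / (2 * sigma_ill**2))
        frame = np.convolve(sample * illumination, detection_psf, mode="same")
        if slit_half_width is not None:
            # Virtual slit: discard light detected far from the current line.
            frame = frame * (np.abs(pixels - y0) <= slit_half_width)
        image += frame
    return image
```

For two point sources 10 pixels apart with `sigma_det=5`, the widefield-like image is brighter midway between them than at either point (they are not resolved), whereas with `slit_half_width=2` the midpoint is much dimmer than the points themselves.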

The team used a type of artificial intelligence (AI) called deep learning to compensate for the trade-offs of imaging at low light levels. Lower light levels reduce damage to samples from the focused laser illumination, allowing longer imaging times, but they are less effective at resolving certain features. The team’s approach improves image quality by training deep learning algorithms to transform multiple blurry, low-resolution images into a high-resolution image.

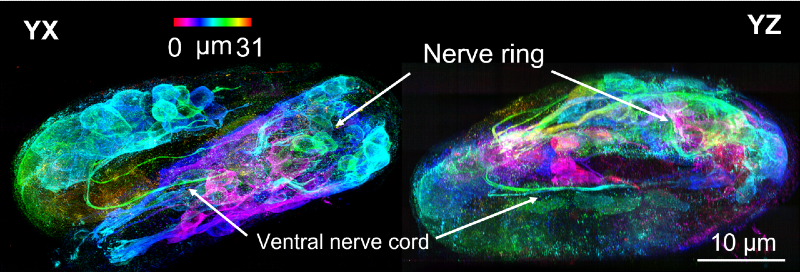

Using deep learning, the team obtained high-resolution images of a wide range of biological specimens and processes, including the development of the roundworm, an organism routinely used to study developmental processes. The technique allowed imaging of roundworm embryos throughout development until they hatched as newborn worms, including visualization of newly formed nerve cells as the embryos began to “twitch” inside the eggshell.

The images also enabled more accurate counts of cells in adult worms and worm embryos, visualization of fine signaling processes in developing fruit fly wings, and observation of the nanoscale dynamics of proteins within immune cells.

Exploring further ways that AI could help, the team also found that, given enough training data, they could teach the deep learning program to take a single confocal view, acquired from one direction, and predict the sharper volume that would be reconstructed from all three views.

Colón-Ramos summed up the significance of the long-running collaboration: “We are excited to use these new and integrated approaches to probe deeper into the biological underpinnings of developing organisms, and understand them across scales and in living animals, from the organization of molecules and organelles inside cells, to the organization of cells inside tissues.”

Funding support: Intramural laboratories and extramural grants, NIH; Marine Biological Laboratory at Woods Hole, MA; and Gordon and Betty Moore Foundation. The work was published in Nature [1].

1. Wu, Y., Han, X., Su, Y. et al. Multiview confocal super-resolution microscopy. Nature (2021). https://doi.org/10.1038/s41586-021-04110-0